The Physics Behind the AI Trade

Why Thermodynamics, Not Narratives, Decides Where Value Accrues in the AI Trade

A physics-first lens for playing the AI trade

Physics is the baseline for the natural world and the absolute ceiling for any problem we solve, artificial intelligence included. Everything above the ‘physics layer’ is a human choice that can, in theory, be changed. Confusing human choices with physical laws is what most people get wrong.

The analysis that follows is grounded in the constraints and opportunities physics imposes. Hard science, not media narratives, will decide where the winning opportunities in the AI trade lie.

The physics that follows exists to serve the investment thesis, not academic completeness. The concepts most applicable are chosen to highlight the constraints, build the analytical foundation, and establish a new lens to evaluate the AI trade.

This framework also serves as a filter to fact-check every tall claim about AI disruption. Physics is the reality check that prevents costly mistakes born of following the crowd rather than grounding analysis in reality.

The Physics behind the AI trade

At its core, the entire AI lifecycle from inception to end is governed by five primary physical fundamentals. Each manifests at various layers of the AI assembly line.

The laws of thermodynamics (Landauer’s limit and heat dissipation)

The speed of light

The principles of quantum mechanics

The laws of information theory (Shannon’s theorem)

The laws of wave physics (the diffraction limit)

Thermodynamics is already the dominant cost driver in AI infrastructure. The energy and cooling buildout it demands is driving capital allocation decisions for every stakeholder in the race to dominate AI. (1)

Data compiled by Goldman Sachs shows the five largest hyperscalers in the US will deploy a cumulative $736B in Capex for the AI buildout in 2025 and 2026 alone. Going further, McKinsey has projected a total investment of ~$5.2 trillion for AI data centres by 2030. (2)

Of that $5.2 trillion, McKinsey estimates $1.3 trillion flows directly into the energisers: the utilities, cooling system manufacturers, and electrical infrastructure providers whose entire business exists to solve the heat and power problem thermodynamics creates. This is the thermodynamics trade expressed in dollars.

The IEA projects that electricity demand from data centres will more than double, from ~415 TWh in 2024 to ~945 TWh by 2030. The 2030 figure is roughly equal to the entire electricity consumption of Japan in 2024, and it arrives in just six years. These figures establish the scale of what thermodynamics demands in capital terms, and the physics below explains why this demand is permanent. (3, 4)
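As a rough check on the pace this implies, the sketch below turns the two IEA figures quoted above into an added-demand number and an implied compound annual growth rate. It assumes smooth compounding between 2024 and 2030, which is a simplification for illustration and not something the IEA projection states.

```python
# IEA figures as quoted in this article (TWh of data centre electricity demand).
demand_2024_twh = 415.0
demand_2030_twh = 945.0
years = 2030 - 2024

# Absolute demand added over the period.
added_twh = demand_2030_twh - demand_2024_twh

# Growth multiple and the compound annual rate that would produce it,
# assuming smooth year-on-year compounding (an illustrative assumption).
growth_multiple = demand_2030_twh / demand_2024_twh
implied_cagr = growth_multiple ** (1 / years) - 1

print(f"Added demand: {added_twh:.0f} TWh")
print(f"Growth multiple: {growth_multiple:.2f}x")
print(f"Implied annual growth: {implied_cagr:.1%}")
```

Under that assumption, data centre demand adds about 530 TWh and compounds at just under 15% a year.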

Thermodynamics imposes physical energy constraints on chip compute capabilities

If you strip away AI to the precise point where physics shows up, it boils down to a single factor: transistors switching states. Billions of times per second, per chip. Those switching events are what execute the matrix multiplications underlying every model, and every switching event costs energy and generates heat.

Every compute operation is subject to the constraints imposed by the second law of thermodynamics. A constraint neither engineering nor software can solve. This is nature imposing its will on every transistor switch on every chip at every data centre ever built by humanity.

The physical law creates two distinct constraints on compute, one theoretical, and one practical. Understanding both builds the foundation for our thesis that the energy infrastructure trade isn’t cyclical capex but a permanent structural requirement.

Constraint 1: Landauer’s Limit - The Theoretical Floor of Compute

First introduced in 1961, Landauer’s limit sets the minimum quantity of heat dissipated into the environment when a single bit of information is erased. At room temperature (~300K), that minimum is about 2.9 × 10⁻²¹ joules per bit erased. This is thermodynamics enforcing an absolute limit that no material, architecture, optimization or engineering can go beyond. It is the theoretical floor of all compute, including AI.
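The room-temperature floor can be reproduced from the standard statement of Landauer’s principle, E = k_B · T · ln 2. The sketch below is only a unit check; the function name is illustrative, and the Boltzmann constant is the exact SI value.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant, exact SI value


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum heat dissipated to erase one bit: k_B * T * ln(2)."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


# Room temperature, as used in the article.
room_temp_limit = landauer_limit_joules(300.0)
print(f"Landauer limit at 300 K: {room_temp_limit:.2e} J per bit erased")
```

Because the limit is linear in temperature, running the chip colder lowers the floor only proportionally. It does not remove it.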

Peer-reviewed literature on this topic argues that modern chips dissipate roughly a million times more energy per logic operation than Landauer’s limit, about six orders of magnitude above the floor. (5)

Landauer’s limit sets the physics-enforced ceiling on compute per watt, but a more practical lens comes from research published by Ho, Erdil & Besiroglu (2023). Their work sets the Landauer floor aside and tackles the more immediate question: “how much more efficient can silicon transistors realistically get before hitting their own engineering limits?” (6)

Current state-of-the-art CMOS architectures, the transistor-based chip designs running today’s AI GPUs, operate about 207x below the maximum efficiency Ho et al. estimate. In other words, chip efficiency can improve by roughly 207x before we hit the practical limit on how little energy per logic operation these chips can consume.
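The 207x figure is a simple ratio of the two efficiency numbers quoted alongside the Ho et al. citation at the end of this article. The sketch below reproduces it; the variable names are illustrative.

```python
# FP16 efficiency figures as quoted in this article's citation of Ho et al.
H100_FP16_FLOP_PER_JOULE = 1.4e12           # H100 today
CMOS_CEILING_FP16_FLOP_PER_JOULE = 2.9e14   # estimated CMOS maximum

# Remaining headroom is the ratio of ceiling efficiency to current efficiency.
headroom = CMOS_CEILING_FP16_FLOP_PER_JOULE / H100_FP16_FLOP_PER_JOULE

# The same gap expressed as energy per operation.
joules_per_flop_today = 1 / H100_FP16_FLOP_PER_JOULE
joules_per_flop_at_ceiling = 1 / CMOS_CEILING_FP16_FLOP_PER_JOULE

print(f"Efficiency headroom: ~{headroom:.0f}x")
print(f"Energy per FP16 FLOP today: {joules_per_flop_today:.2e} J")
print(f"Energy per FP16 FLOP at ceiling: {joules_per_flop_at_ceiling:.2e} J")
```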

While this may sound like a lot, training compute for frontier AI models is growing 4-5x per year, so efficiency gains are consumed by demand growth before they can reduce energy infrastructure requirements. This explains the massive grid capex and hyperscaler expansion reflected in the McKinsey and Goldman Sachs estimates outlined above. (7)
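To see how quickly demand growth can absorb a 207x efficiency gain, the sketch below solves growth^years = headroom for years. It assumes, purely for illustration, that the full 207x arrives with no delay and that compute demand keeps compounding at a constant 4x or 5x a year. Neither assumption comes from the cited sources.

```python
import math


def years_to_absorb(headroom: float, annual_growth: float) -> float:
    """Years of compounding demand growth needed to offset an efficiency gain.

    Solves annual_growth ** years == headroom for years.
    """
    return math.log(headroom) / math.log(annual_growth)


EFFICIENCY_HEADROOM = 207.0  # remaining CMOS headroom quoted in the article

for growth in (4.0, 5.0):
    years = years_to_absorb(EFFICIENCY_HEADROOM, growth)
    print(f"At {growth:.0f}x per year, 207x is absorbed in {years:.1f} years")
```

On these assumptions the entire remaining headroom is offset in a bit under four years at 4x growth and a bit over three years at 5x.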

Constraint 2: Heat Dissipation - The Practical Constraint of Compute

Landauer’s principle is evidence of physics imposing its will on compute. Every switching event generates heat as a consequence of executing logic operations within the constraints of physics.

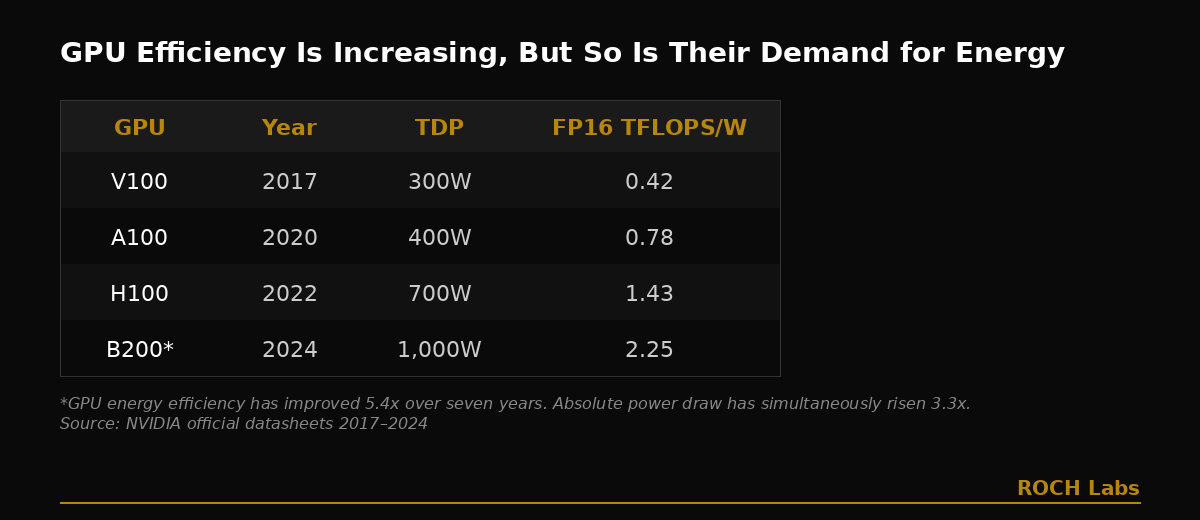

Take the Nvidia H100, which draws ~700W per chip. The next-gen GB200 NVL72 rack draws nearly 120kW for 72 Blackwell GPUs; in comparison, a single H100 rack consumes 10-30kW. That is a 4-12x increase in rack power density in a single generation of GPU offerings. The increase isn’t a one-off either. Mapping power draw across four generations of chips, V100 (~300W in 2017), A100 (~400W in 2020), H100 (~700W in 2022), and GB200 (~1,000W in 2024), shows that each generation demands meaningfully more power and cooling than the last. (8)

Note: Citations 9-12 link to the original source material for the table data above.
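The rack and per-chip figures quoted above can be turned into ratios with a few lines of arithmetic. The sketch below uses only the approximate wattages and years given in the text; the annualised growth rate assumes smooth compounding between 2017 and 2024, which is an illustrative simplification.

```python
# Rack-level figures as quoted in the article (kW).
gb200_rack_kw = 120.0
h100_rack_kw_low, h100_rack_kw_high = 10.0, 30.0

# Rack power density increase, from the densest to the lightest H100 rack.
ratio_low = gb200_rack_kw / h100_rack_kw_high
ratio_high = gb200_rack_kw / h100_rack_kw_low
print(f"Rack power density increase: {ratio_low:.0f}x to {ratio_high:.0f}x")

# Per-chip power draw by generation: (year, name, approximate watts).
generations = [
    (2017, "V100", 300.0),
    (2020, "A100", 400.0),
    (2022, "H100", 700.0),
    (2024, "GB200", 1000.0),
]

# Step-up in power draw from each generation to the next.
for (_, prev_name, prev_w), (_, name, watts) in zip(generations, generations[1:]):
    print(f"{prev_name} -> {name}: {watts / prev_w:.2f}x")

# Annualised growth in per-chip power, assuming smooth compounding.
first_year, _, first_w = generations[0]
last_year, _, last_w = generations[-1]
chip_power_cagr = (last_w / first_w) ** (1 / (last_year - first_year)) - 1
print(f"Per-chip power growth: {chip_power_cagr:.1%} per year")
```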

At these rates of power consumption, liquid cooling is no longer optional infrastructure. It is mandatory, and it scales permanently with every watt deployed.

To sum up, Landauer’s limit establishes the physical cost of compute which forces generation and consumption of electricity, and heat dissipation mandates cooling infrastructure as heat scales permanently with compute. These aren’t cyclical capex driven by a temporary infrastructure gap. They are structural requirements imposed by physics.

Thermodynamics has already decided what the AI infrastructure stack must look like. The next question is who builds it, who powers it, and who profits from it. In Part 2, I'll map the exact companies whose business models exist to solve the physical constraints outlined above. The investment case for each one is anchored in physical laws that don’t change regardless of who wins the model race. Stay tuned!

If you found this useful, subscribe below. Part 2 drops next week.

Citations

(1) Goldman Sachs Research, "How AI Is Transforming Data Centers and Ramping Up Power Demand," July 16, 2024.

(2) Jesse Noffsinger et al., "The cost of compute: A $7 trillion race to scale data centers," McKinsey Quarterly, April 2025.

(3) International Energy Agency, "Energy and AI - Energy Demand from AI," April 2025.

(4) Enerdata via Statista, "Electricity consumption worldwide in 2024, by leading country," July 2025.

(5) Chattopadhyay, P., Misra, A., Pandit, T., and Paul, G., "Landauer Principle and Thermodynamics of Computation," June 2025.

(6) Ho, A., Erdil, E., and Besiroglu, T., "Limits to the Energy Efficiency of CMOS Microprocessors," 2023 IEEE International Conference on Rebooting Computing, December 2023. H100 today achieves ~1.4×10¹² FP16 FLOP/J. The estimated maximum CMOS efficiency ceiling is ~2.9×10¹⁴ FP16 FLOP/J. That gap is approximately 207x.

(7) Sevilla, J. and Roldán, E., "Training Compute of Frontier AI Models Grows by 4-5x Per Year," Epoch AI, May 28, 2024.

(8) NVIDIA Corporation, "DGX GB200 User Guide - Hardware," NVIDIA Documentation, August 2025.

(9) NVIDIA Corporation, "NVIDIA V100 Tensor Core GPU Datasheet," January 2020.

(10) NVIDIA Corporation, "NVIDIA A100 Tensor Core GPU Datasheet," May 2022.

(11) NVIDIA Corporation, "NVIDIA H100 GPU - Product Specifications," accessed February 2026.

(12) Lenovo, "ThinkSystem NVIDIA HGX B200 180GB 1000W GPU Product Guide," Lenovo Press, LP2226, January 13, 2026. https://lenovopress.lenovo.com/LP2226, citing NVIDIA GPU specifications, Table 2.