The Wattage Waterfall of Compute

A single GB200 NVL72 rack draws ~120kW. A standard office building draws ~50-100kW. One AI training rack consumes more power than the entire building you're sitting in.

In Part 1 we established that every logic operation costs energy and generates heat, a constraint thermodynamics imposes permanently.

Today we follow that heat all the way up the stack, from a single chip to a full-scale data center running AI workloads around the clock.

The Wattage Waterfall

A single logic operation at the level of a chip generates heat that demands cooling. A cluster of ten thousand chips demands a power grid. A training run demands that the grid run without interruption for weeks, and once a model deploys, inference demand runs the grid forever.

The Chip: The micro-unit of compute

A single H100 draws 700W of power at peak. One H100 server (8 GPUs) draws 10.2kW of total system power. A single GB200 NVL72 rack, Nvidia’s current Blackwell configuration, draws ~120kW. A standard office building draws roughly 50-100kW, which means one AI training rack consumes more power than an entire office building. (1), (2), (3)
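The chip-to-rack step can be sketched in a few lines of Python. This is a rough illustration using only the figures quoted above. The per-GPU split is an assumption of the sketch: the server and rack totals include CPUs, networking, and other components, and the rack uses Blackwell GPUs rather than H100s, so the per-GPU numbers are not like-for-like.

```python
# Power per GPU at each level of the stack, using figures from the text.
# Dividing system power by GPU count is a simplification: server and rack
# totals also cover CPUs, switches, fans, and other non-GPU components.

levels = [
    # (label, total watts, GPUs at that level)
    ("H100 chip (peak)", 700, 1),
    ("H100 server, 8 GPUs", 10_200, 8),
    ("GB200 NVL72 rack, 72 GPUs", 120_000, 72),
]


def watts_per_gpu(total_watts: float, num_gpus: int) -> float:
    """Total system power divided evenly across the GPUs it hosts."""
    return total_watts / num_gpus


for label, total_w, gpus in levels:
    print(f"{label}: {total_w / 1000:.1f} kW total, "
          f"{watts_per_gpu(total_w, gpus):.0f} W per GPU")

# How many "office buildings" one rack represents, across the 50-100kW range.
OFFICE_KW_RANGE = (50, 100)
RACK_KW = 120
rack_vs_office = tuple(RACK_KW / kw for kw in OFFICE_KW_RANGE)
print(f"One rack = {rack_vs_office[1]:.1f}x to {rack_vs_office[0]:.1f}x an office building")
```

The takeaway is that system power per GPU rises as you move up the stack, because each level adds hardware beyond the GPUs themselves.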

The Cluster: Where scale meets physics

A frontier model training cluster runs 10k to 25k+ GPUs simultaneously. At 700W per H100, a 10k-GPU cluster draws 7MW continuously for the GPUs alone. xAI’s Colossus cluster in Memphis runs ~100,000 H100s and draws ~150-200 MW continuously. (1), (4), (5)
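A quick sketch of the cluster arithmetic, assuming every GPU runs at its 700W peak. The gap between the GPU-only figure and the reported 150-200 MW for Colossus is treated here as everything else in the facility (host systems, networking, cooling). That reading is an assumption of this sketch, not something the cited sources break down.

```python
# Back-of-envelope cluster power from the per-chip figure in the text.
H100_PEAK_W = 700  # peak draw per H100


def gpu_only_power_mw(num_gpus: int, watts_per_gpu: float = H100_PEAK_W) -> float:
    """Peak power of the GPUs alone, in megawatts."""
    return num_gpus * watts_per_gpu / 1e6


ten_k_mw = gpu_only_power_mw(10_000)     # 7.0 MW
colossus_mw = gpu_only_power_mw(100_000)  # 70.0 MW

# Ratio of reported facility draw (150-200 MW) to GPU-only draw.
# Attributing the gap to non-GPU overhead is an assumption.
ratio_low = 150 / colossus_mw
ratio_high = 200 / colossus_mw

print(f"10k GPUs: {ten_k_mw:.1f} MW, 100k GPUs: {colossus_mw:.1f} MW (GPUs only)")
print(f"Reported facility draw is {ratio_low:.1f}x to {ratio_high:.1f}x the GPU-only figure")
```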

The training run level: The wattage it takes to bring GPT-4 to life

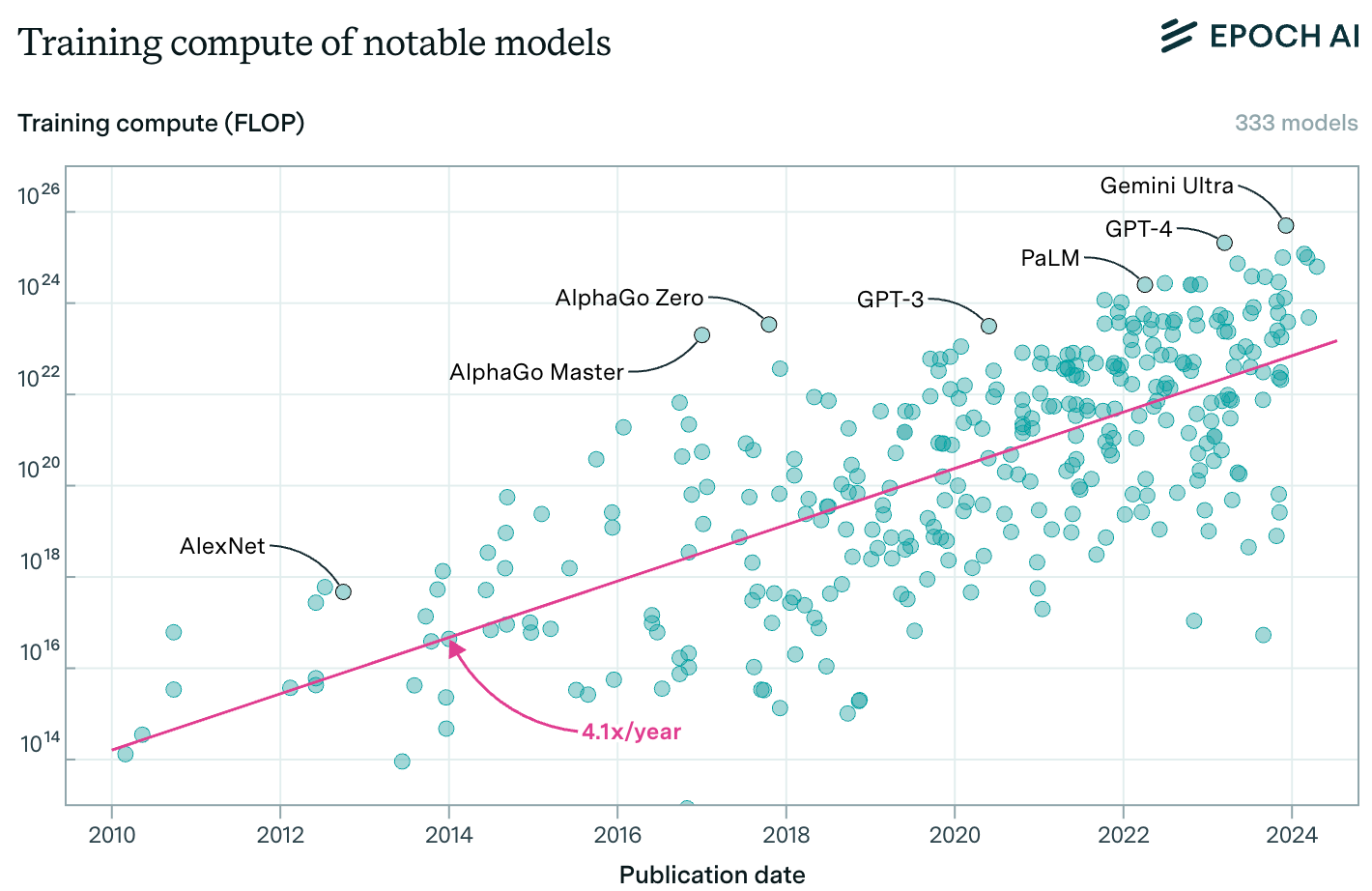

GPT-4 is estimated to have consumed ~50 GWh of electricity in training, enough to power ~4,800 average US homes for a full year. For xAI’s Grok 4, estimates put training consumption at ~310 GWh, roughly 6x that of GPT-4. As models become bigger, training compute will continue to expand: Epoch AI estimates that training compute scales ~4x per year, and by extension the energy cost of training scales at roughly the same rate. (5), (6), (7), (8)
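The conversions behind these figures are easy to check. The sketch below uses the 10,500 kWh per household per year from citation (7). The `projected_training_gwh` helper is hypothetical and only illustrates what compounding at a fixed yearly multiple means. It is not a forecast.

```python
# Training-energy arithmetic, using the estimates quoted in the text.
GPT4_TRAINING_GWH = 50
GROK4_TRAINING_GWH = 310
US_HOME_KWH_PER_YEAR = 10_500  # per citation (7)
KWH_PER_GWH = 1_000_000

# GPT-4 training energy expressed as household-years of electricity.
homes_powered = GPT4_TRAINING_GWH * KWH_PER_GWH / US_HOME_KWH_PER_YEAR

# Grok 4 training energy as a multiple of GPT-4's.
grok_vs_gpt4 = GROK4_TRAINING_GWH / GPT4_TRAINING_GWH


def projected_training_gwh(base_gwh: float, growth_per_year: float, years: int) -> float:
    """Hypothetical helper: compound a base energy figure at a fixed yearly multiple.

    Assumes energy tracks compute one-for-one, which is the text's simplification.
    """
    return base_gwh * growth_per_year ** years


print(f"Homes powered for a year: ~{homes_powered:,.0f}")
print(f"Grok 4 vs GPT-4: {grok_vs_gpt4:.1f}x")
```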

The inference level: Where energy demand becomes permanent

OpenAI CEO Sam Altman disclosed in a June 2025 blog post that a single ChatGPT query uses ~0.34 watt-hours. Epoch AI’s independent estimate of ~0.3 Wh per query is consistent with Altman’s figure. In the post, Altman equated this to running an oven for a little over one second, or a high-efficiency lightbulb for a couple of minutes. (6), (9), (10)

In July 2025, TechCrunch, citing OpenAI, reported 2.5B daily queries (or prompts). At ~0.34 Wh per query, inference alone therefore consumes 0.34 Wh x 2.5B = ~850 MWh per day, which works out to an annual run rate of 850 MWh x 365 = ~310 GWh per year. (11)
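The unit conversions here are where mistakes usually creep in, so here is a minimal sketch of the same calculation. It also derives the average continuous power this implies, a figure not quoted in the text. It assumes queries are spread evenly across the day.

```python
# Inference energy from per-query energy and daily query volume.
WH_PER_QUERY = 0.34       # Altman's figure, citation (9)
QUERIES_PER_DAY = 2.5e9   # TechCrunch citing OpenAI, citation (11)

daily_wh = WH_PER_QUERY * QUERIES_PER_DAY
daily_mwh = daily_wh / 1e6            # Wh -> MWh
annual_gwh = daily_mwh * 365 / 1e3    # MWh/day -> GWh/year

# Average continuous draw, assuming load is spread evenly over 24 hours.
avg_power_mw = daily_mwh / 24

print(f"Daily: {daily_mwh:,.0f} MWh")
print(f"Annual: {annual_gwh:,.0f} GWh")
print(f"Average continuous power: {avg_power_mw:.1f} MW")
```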

As noted above, GPT-4 training consumed ~50 GWh of electricity. A simple division shows that inference consumes the equivalent of the entire training run in ~59 days (50,000 MWh ÷ 850 MWh/day = ~59 days). MIT Technology Review further reports that inference accounts for ~80-90% of total AI lifecycle energy, and reasoning models (o1/o3-class) only escalate this consumption: data from Epoch AI shows they consume 2.5x more tokens than regular models. (6), (10)
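To see how the crossover relates to a lifecycle share, the sketch below tracks inference as a fraction of cumulative energy over time. It inherits the text's simplification of pairing the GPT-4 training estimate with 2025 query volume, and it assumes flat daily demand. The 80-90% figure in the text comes from the cited source, not from this calculation. The helper names are illustrative.

```python
# Inference vs. training energy over a model's deployed life (illustrative).
TRAINING_MWH = 50_000         # ~50 GWh GPT-4 training estimate
INFERENCE_MWH_PER_DAY = 850   # daily inference energy derived in the text


def days_to_match_training() -> float:
    """Days of inference needed to equal the one-off training energy."""
    return TRAINING_MWH / INFERENCE_MWH_PER_DAY


def inference_share(days: float) -> float:
    """Inference as a fraction of cumulative (training + inference) energy."""
    inference_mwh = INFERENCE_MWH_PER_DAY * days
    return inference_mwh / (inference_mwh + TRAINING_MWH)


def days_until_share(target: float) -> float:
    """Days of flat inference demand until inference reaches `target` share."""
    return target * TRAINING_MWH / ((1 - target) * INFERENCE_MWH_PER_DAY)


print(f"Crossover: ~{days_to_match_training():.0f} days")
for target in (0.8, 0.9):
    print(f"Inference reaches {target:.0%} of lifecycle energy "
          f"after ~{days_until_share(target):.0f} days")
```

Under these assumptions, inference is half of cumulative energy at the ~59-day crossover and keeps climbing for as long as the model stays in service.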

The Data Center Level: Where it all aggregates

The IEA’s base case has global data center electricity consumption more than doubling between 2024 and 2030, from ~415 TWh (c. 1.5% of global electricity) to ~945 TWh. For context, 945 TWh is roughly equivalent to the entire current annual electricity consumption of Japan. A Goldman Sachs report adds that data centers will account for 8% of total US power consumption by 2030, up from ~3% in 2022. This massive demand for power explains Goldman Sachs’s estimate of approx. $720B in grid spending by 2030, and the estimated ~$736B in capex announced by the top 5 hyperscalers for 2025-26 alone, as outlined above. (12), (13)
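For a sense of pace, the IEA endpoints can be turned into an implied compound annual growth rate. This is a minimal sketch that assumes smooth growth between the two quoted figures. The IEA does not state the rate in this form here.

```python
# Implied compound annual growth rate between the IEA's 2024 and 2030 figures.
START_TWH, START_YEAR = 415, 2024
END_TWH, END_YEAR = 945, 2030

years = END_YEAR - START_YEAR
growth_multiple = END_TWH / START_TWH

# CAGR = (end / start)^(1 / years) - 1, assuming smooth year-on-year growth.
cagr = growth_multiple ** (1 / years) - 1

print(f"Growth multiple: {growth_multiple:.2f}x over {years} years")
print(f"Implied CAGR: {cagr:.1%}")
```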

Conclusion

The wattage waterfall turns the constraints of physics (thermodynamics) into structural requirements. This brings us to three primary conclusions relevant to any investor trying to build a foundational understanding of the AI trade.

First, power must be generated. Second, that power must be effectively transmitted across the racks. Third, these AI workloads will generate significant heat, which must be removed from the environment.

These three requirements not only mandate large upfront investments in power, grid, and cooling infrastructure, but also make that spending structural rather than cyclical, irrespective of who wins the model race.

Now that we’ve explained the constraints of thermodynamics in Part 1, and the current energy & cooling requirements for SOTA chips powering massive AI workloads, the next question is who builds it, who powers it, and who profits from it.

In Part 3, I'll map the exact companies whose business models exist to solve the physical constraints outlined above. The investment case for each one is anchored in physical laws that don’t change regardless of who wins the model race. Stay tuned!

If you found this useful, subscribe. Part 3 drops next week.

Citations

(1) NVIDIA Corporation, "NVIDIA H100 GPU: Product Specifications," accessed February 2026.

(2) NVIDIA Corporation, "NVIDIA DGX H100 Datasheet," March 2022. Document number A4-2146027-R3.

(3) NVIDIA Corporation, "DGX GB200 NVL72 Datasheet," NVIDIA Documentation, accessed March 2026.

(4) Pilz, K., Rahman, R., Sanders, J. and Heim, L., "AI Training Cluster Sizes Increased by More Than 20x Since 2016," Epoch AI, October 23, 2024.

(5) James Sanders, Luke Emberson and Yafah Edelman, "What did it take to train Grok 4?" Epoch AI, September 12, 2025.

(6) James O'Donnell and Casey Crownhart, "We did the math on AI's energy footprint," MIT Technology Review, May 20, 2025.

(7) U.S. Energy Information Administration, "Electricity Use in Homes," updated December 18, 2023. Derived estimate: 50 GWh ÷ 10,500 kWh/household/year = ~4,762 households. Rounded to ~4,800 in text.

(8) Sevilla, J. and Roldán, E., "Training Compute of Frontier AI Models Grows by 4-5x Per Year," Epoch AI, May 28, 2024.

(9) Sam Altman, "The Gentle Singularity," blog.samaltman.com, June 11, 2025.

(10) You, J., "How much energy does ChatGPT use?" Epoch AI, February 7, 2025.

(11) Dastin, J., "ChatGPT users send 2.5 billion prompts a day," TechCrunch, July 21, 2025.

(12) International Energy Agency, "Energy and AI: Energy Demand from AI," IEA, April 10, 2025. https://www.iea.org/reports/energy-and-ai/energy-demand-from-ai

(13) Goldman Sachs Research, "AI to drive 165% increase in data center power demand by 2030," February 4, 2025.